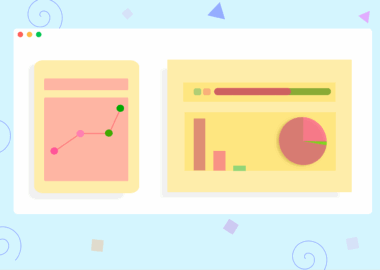

Key Metrics to Track in Predictive Analytics

Predictive analytics is a vital business tool that uses statistical algorithms and machine learning techniques to estimate the likelihood of future outcomes from historical data. One crucial metric to track is the accuracy of predictions: the share of a model's predictions that match the actual outcomes. High accuracy usually indicates a reliable model, but it can mislead when one class is rare; a model that always predicts "no fraud" on data where 1% of transactions are fraudulent is 99% accurate yet useless. Another essential metric is precision, the ratio of true positive predictions to all positive predictions made. It reflects the model's ability to avoid false positives. Recall, by contrast, is the ratio of true positive predictions to all actual positives, and it reflects the model's ability to avoid false negatives. Improving one of these often lowers the other, so balancing precision and recall is challenging but necessary for good predictive performance. The F1 score, the harmonic mean of precision and recall, condenses this balance into a single number. Understanding these metrics helps businesses use predictive analytics effectively, and by continuously monitoring them and adjusting models accordingly, organizations can improve their forecasting capabilities and gain a competitive edge in the marketplace.
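To make these definitions concrete, here is a minimal sketch in plain Python (standard library only) that counts outcomes and derives accuracy, precision, recall and F1. The names `ConfusionCounts`, `count_outcomes` and `classification_metrics` are illustrative assumptions rather than any particular library's API, labels are assumed to be 0/1, and undefined ratios are reported as 0.0 by choice.

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    """Counts from a binary confusion matrix (1 = positive, 0 = negative)."""
    tp: int
    fp: int
    tn: int
    fn: int


def count_outcomes(y_true: list[int], y_pred: list[int]) -> ConfusionCounts:
    """Tally true/false positives and negatives from paired labels."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return ConfusionCounts(tp, fp, tn, fn)


def classification_metrics(c: ConfusionCounts) -> dict[str, float]:
    """Accuracy, precision, recall and F1; undefined ratios are reported as 0.0."""
    total = c.tp + c.fp + c.tn + c.fn
    accuracy = (c.tp + c.tn) / total
    precision = c.tp / (c.tp + c.fp) if (c.tp + c.fp) else 0.0
    recall = c.tp / (c.tp + c.fn) if (c.tp + c.fn) else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall) if (precision + recall) else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


y_true = [1, 1, 1, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 1, 0, 0, 0, 0]
print(classification_metrics(count_outcomes(y_true, y_pred)))
```

With this toy data the model gets 2 true positives, 1 false positive, 4 true negatives and 1 false negative, so accuracy is 0.75 while precision, recall and F1 are all about 0.67. Feeding in 100 labels with a single positive and an "always 0" predictor gives 0.99 accuracy but 0.0 recall, which is the imbalance trap described above.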

Another important metric in predictive analytics is AUC, the area under the receiver operating characteristic (ROC) curve, which evaluates the performance of binary classification models. It measures how well the model separates the positive and negative classes across all decision thresholds; equivalently, it is the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative one. An AUC of 0.5 is equivalent to random guessing, while 1.0 indicates perfect separation, so the closer a model's AUC is to 1.0, the more useful its scores are for decision-making. The confusion matrix is another valuable tool; it summarizes a classification model's performance by counting its true positives, false positives, true negatives, and false negatives. From this matrix, businesses can derive metrics such as specificity, the proportion of actual negatives correctly identified. Tracking the model's lift is also crucial: lift compares the rate of positive responses among the cases the model ranks highest with the rate expected from random selection. Lift can significantly shape marketing strategies by highlighting which targeted campaigns yield higher returns. Lastly, the Kolmogorov-Smirnov (KS) statistic, the largest gap between the cumulative score distributions of the positive and negative classes, shows how well the model differentiates between classes, helping businesses tune their predictive models.
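The ranking metrics above can be sketched from scratch in Python, assuming the model outputs a numeric score per case and labels are 0/1. AUC is computed through its pairwise-ranking interpretation (ties count half), KS as the largest gap between the two classes' empirical score CDFs, and lift for a chosen top fraction of scored cases. All function names and the sample data are hypothetical, and the quadratic AUC loop is meant for illustration, not for large datasets.

```python
from bisect import bisect_right


def split_scores(y_true, scores):
    """Separate model scores by actual class (1 = positive, 0 = negative)."""
    pos = sorted(s for y, s in zip(y_true, scores) if y == 1)
    neg = sorted(s for y, s in zip(y_true, scores) if y == 0)
    return pos, neg


def roc_auc(y_true, scores):
    """AUC as the probability a random positive outscores a random negative (ties count half)."""
    pos, neg = split_scores(y_true, scores)
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def ks_statistic(y_true, scores):
    """Largest gap between the empirical score CDFs of the two classes."""
    pos, neg = split_scores(y_true, scores)
    return max(
        abs(bisect_right(pos, t) / len(pos) - bisect_right(neg, t) / len(neg))
        for t in set(scores)
    )


def lift_at(y_true, scores, fraction):
    """Positive rate in the top-scored fraction divided by the overall positive rate."""
    ranked = sorted(zip(scores, y_true), key=lambda pair: pair[0], reverse=True)
    k = max(1, round(len(ranked) * fraction))
    top_rate = sum(y for _, y in ranked[:k]) / k
    base_rate = sum(y_true) / len(y_true)
    return top_rate / base_rate


def specificity(y_true, scores, threshold):
    """Share of actual negatives scored below the decision threshold."""
    tn = sum(1 for y, s in zip(y_true, scores) if y == 0 and s < threshold)
    fp = sum(1 for y, s in zip(y_true, scores) if y == 0 and s >= threshold)
    return tn / (tn + fp)


y = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]
s = [0.9, 0.8, 0.7, 0.65, 0.6, 0.4, 0.3, 0.2, 0.15, 0.1]
print(roc_auc(y, s), ks_statistic(y, s), lift_at(y, s, 0.3), specificity(y, s, 0.5))
```

On this toy data only one positive/negative pair is ranked the wrong way round, so AUC is 23/24 (about 0.96); KS is 5/6; the top 30% of scores are all positives against a 40% base rate, giving a lift of 2.5; and specificity at a 0.5 threshold is 5/6.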

Understanding Model Validation

Effective model validation is another key aspect of predictive analytics. It involves assessing the model's performance on different subsets of data to estimate its predictive power. Cross-validation is a common approach: the dataset is divided into several parts (folds), and the model is repeatedly trained on all but one fold and tested on the held-out fold, which gives a more reliable estimate of how the model will perform on new data. Monitoring for overfitting is crucial during this process, as overfitted models may perform excellently on training data but poorly on unseen data. For models that predict numeric values, businesses should also measure the mean absolute error (MAE), the average magnitude of the errors in a set of predictions. MAE is more robust to outliers than the mean squared error (MSE), which averages the squared errors and therefore penalizes large errors more heavily; MSE is the better choice when large misses are especially costly. These metrics should be examined continuously over time, and continuous feedback loops are essential for refining models as trends in the data change, sustaining predictive accuracy for long-term strategic benefit.
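A minimal k-fold cross-validation sketch follows, assuming a single numeric input and a straight-line least-squares model chosen purely for illustration; any model with fit and predict steps could take its place. The helper names and the fixed shuffle seed are hypothetical, and the code assumes there are at least as many rows as folds.

```python
import random


def k_fold_indices(n, k, seed=0):
    """Shuffle row indices once, then deal them into k roughly equal folds (assumes n >= k)."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]


def fit_line(xs, ys):
    """Ordinary least-squares slope and intercept for one input variable."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, my - slope * mx


def mae(actual, predicted):
    """Mean absolute error."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def mse(actual, predicted):
    """Mean squared error."""
    return sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)


def cross_validate(xs, ys, k=5, seed=0):
    """Average held-out MAE and MSE of a straight-line model over k folds."""
    fold_scores = []
    for test_idx in k_fold_indices(len(xs), k, seed):
        held_out = set(test_idx)
        train_idx = [i for i in range(len(xs)) if i not in held_out]
        # Fit only on the training folds, then score on the held-out fold.
        slope, intercept = fit_line([xs[i] for i in train_idx], [ys[i] for i in train_idx])
        preds = [slope * xs[i] + intercept for i in test_idx]
        actual = [ys[i] for i in test_idx]
        fold_scores.append((mae(actual, preds), mse(actual, preds)))
    return (
        sum(m for m, _ in fold_scores) / k,
        sum(s for _, s in fold_scores) / k,
    )


# One large miss moves MSE far more than MAE.
print(mae([0, 0, 0, 0], [1, 1, 1, 5]), mse([0, 0, 0, 0], [1, 1, 1, 5]))  # 2.0 7.0
```

The last line illustrates the outlier point: three errors of 1 and one error of 5 give an MAE of 2.0 but an MSE of 7.0, because squaring makes the single large miss dominate.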

Monitoring deployment metrics is also essential for assessing the ongoing performance of predictive models. Businesses should evaluate the model's usage statistics to ensure it meets its intended goals, including how often predictions are requested, how many users interact with the model, and when predictions are made. Comprehensive reporting on these figures helps gauge how much users trust and rely on the insights provided. In addition, analyzing feedback from stakeholders and end users can identify areas for improvement. Statistical significance testing is useful here, ensuring that model changes are based on substantial evidence rather than conjecture. Furthermore, sensitivity analysis shows how changes in input variables influence model output, allowing businesses to identify the critical variables that drive predictions. By methodically tracking deployment metrics and keeping an agile approach to model performance, companies can adapt to market changes swiftly. Regular performance assessments help organizations refine their predictive analytics strategies more effectively, ultimately supporting better-informed decisions and greater business success.
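One simple form of sensitivity analysis is the one-at-a-time method: move each input a fixed relative step above and below its baseline while holding the others fixed, and record how far the output swings. The sketch below assumes the model can be called as a plain Python function with keyword arguments; `toy_demand_model` and its coefficients are entirely hypothetical, and this method ignores interactions between inputs.

```python
def one_at_a_time_sensitivity(model, baseline, rel_step=0.1):
    """Swing in model output when each input moves +/- rel_step around its baseline.

    Inputs are varied one at a time while the others stay fixed; baseline values
    must be non-zero for a relative step to have any effect.
    """
    swings = {}
    for name, value in baseline.items():
        low = {**baseline, name: value * (1 - rel_step)}
        high = {**baseline, name: value * (1 + rel_step)}
        swings[name] = model(**high) - model(**low)
    # Rank inputs by the size of their effect, largest first.
    return dict(sorted(swings.items(), key=lambda kv: abs(kv[1]), reverse=True))


def toy_demand_model(price, ad_spend, season):
    """Hypothetical linear demand model used only for illustration."""
    return 500 - 20 * price + 0.5 * ad_spend + 30 * season


baseline = {"price": 10.0, "ad_spend": 200.0, "season": 1.0}
print(one_at_a_time_sensitivity(toy_demand_model, baseline))
```

Here price comes out as the most influential input (a swing of about -40 units), followed by ad spend (about +20) and season (about +6). For a real model, the same loop would simply call its prediction function in place of the toy one.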

Integrating Data Quality Measures

Integrating data quality measures is paramount to the robustness of predictive analytics. Data accuracy, completeness, consistency, and timeliness should be assessed continually, because flawed data leads to misguided predictions. Businesses should implement data screening processes that detect inaccuracies so they can be corrected. Data profiling tools are advisable, as they let organizations measure the quality of their data across various metrics. Effective data governance policies safeguard data integrity and enhance overall reliability. Another valuable practice is tracking data lineage, that is, how data flows from its origin to its destination, which helps ensure data remains consistent through transformations. Post-processing can also be beneficial: correcting or adjusting records to clean up any inconsistencies or errors detected during analysis. Regular audits ensure compliance with the organization's quality standards. Consequently, organizations committed to stringent data quality measures will see more accurate and reliable predictive analytics, leading to superior business outcomes.
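As a small illustration of data profiling, the sketch below measures three of the quality dimensions named above for records held as Python dictionaries: completeness (share of non-missing values per field), uniqueness (repeated record keys, a common consistency check), and timeliness (records not updated within an allowed window). The function name, field names, 90-day threshold and sample records are all hypothetical; dedicated profiling tools cover far more checks.

```python
from datetime import date


def profile_records(records, key_field, date_field, as_of, max_age_days):
    """Completeness per field, duplicate keys, and stale rows for a list of dict records."""
    fields = sorted({f for r in records for f in r})
    # Completeness: share of records where the field is present and not None.
    completeness = {
        f: sum(1 for r in records if r.get(f) is not None) / len(records) for f in fields
    }
    # Uniqueness: note every repeat occurrence of a key.
    seen, duplicates = set(), []
    for r in records:
        key = r.get(key_field)
        if key in seen:
            duplicates.append(key)
        else:
            seen.add(key)
    # Timeliness: rows whose last update is older than the allowed age.
    stale = [
        r.get(key_field)
        for r in records
        if r.get(date_field) is not None and (as_of - r[date_field]).days > max_age_days
    ]
    return {"completeness": completeness, "duplicate_keys": duplicates, "stale_keys": stale}


customers = [
    {"id": 1, "email": "a@example.com", "updated": date(2024, 1, 10)},
    {"id": 2, "email": None, "updated": date(2023, 6, 1)},
    {"id": 2, "email": "b@example.com", "updated": date(2024, 1, 12)},
    {"id": 3, "email": "c@example.com", "updated": None},
]
print(profile_records(customers, "id", "updated", as_of=date(2024, 1, 31), max_age_days=90))
```

For the sample records, email and update date are each 75% complete, key 2 appears twice, and one row for key 2 is stale. Running a report like this on a schedule is one way to feed the regular audits mentioned above.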

User engagement metrics also deserve close attention in predictive analytics. They gauge how users interact with the output of predictive models. Examining metrics such as click-through and conversion rates after predictions are put into use reveals the models' tangible impact on user behavior. Personalizing predictive insights to increase engagement can significantly raise the model's value. Organizations should also incorporate user feedback mechanisms: surveys collect qualitative data that can inform future model training, while A/B testing measures which variants actually engage the audience, helping refine predictive strategies. Tracking customer satisfaction through metrics such as Net Promoter Score (NPS) offers insight into loyalty and long-term engagement trends. A continuous loop of gathering user feedback not only boosts the effectiveness of predictive analytics but also builds users' trust. To realize the full potential of predictive analytics, businesses must stay attentive to user engagement metrics and invest in tailored experiences that improve user satisfaction in line with overall business objectives.
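The sketch below shows two of these measurements in plain Python. NPS uses the standard 0-10 survey scale, with promoters scoring 9-10 and detractors 0-6. For the A/B comparison of conversion rates, it uses a two-proportion z-test with a pooled standard error, which is one common choice among several valid tests. The function names and the conversion counts are hypothetical, and the normal approximation assumes reasonably large samples.

```python
import math


def net_promoter_score(ratings):
    """NPS from 0-10 survey ratings: % promoters (9-10) minus % detractors (0-6)."""
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100 * (promoters - detractors) / len(ratings)


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference in conversion rates between variants A and B."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value under the standard normal: 2 * (1 - Phi(|z|)) = erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


print(net_promoter_score([10, 9, 8, 7, 6, 3, 10, 9, 9, 5]))  # 20.0
print(two_proportion_z_test(200, 5000, 260, 5000))
```

In the hypothetical test, variant B converts at 5.2% against 4.0% for A, giving z of roughly 2.86 and a two-sided p-value below 0.01. That would count as reasonable evidence of a real difference rather than noise, which is the kind of check the deployment section recommends before acting on a change.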

Leveraging Automation in Predictive Analytics

Finally, automating predictive analytics processes can speed up model iteration and improve accuracy and reliability. Businesses can use automated pipelines and machine learning tooling to handle data collection, cleaning, and modeling, significantly reducing manual workload. Automation also makes it easier to back-test models against historical data, so organizations can identify which models best capture the underlying patterns in their data. Automating the evaluation of performance metrics keeps organizations responsive as model performance changes over time, and automated tools enable rapid adjustments, creating an agile predictive environment. Cloud computing solutions let businesses scale their analytics capabilities easily, so automated workflows can absorb increasing data volumes. As automated systems take over routine work, teams spend more time interpreting insights and less on manual processes. A data-driven culture supported by automation can significantly advance an organization's predictive analytics efforts. By embracing these technological advancements, organizations can enhance their decision-making, driving growth and better business outcomes.
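Automated back-testing can be as simple as running every candidate model through the same walk-forward loop and keeping the one with the lowest error. In this loop, each point in a historical series is forecast using only the data before it. The sketch below assumes forecasters are plain functions from a history list to a next-value forecast and scores them by MAE. The two toy forecasters, the `min_train` default and the sales figures are hypothetical stand-ins for real candidate models.

```python
def naive_last(history):
    """Forecast the next value as the most recent observation."""
    return history[-1]


def moving_average(window):
    """Build a forecaster that averages the last `window` observations."""
    def forecast(history):
        recent = history[-window:]
        return sum(recent) / len(recent)
    return forecast


def walk_forward_mae(series, forecaster, min_train):
    """Back-test: forecast each point using only the data before it, then average the errors."""
    errors = [abs(series[t] - forecaster(series[:t])) for t in range(min_train, len(series))]
    return sum(errors) / len(errors)


def select_best_model(series, candidates, min_train=3):
    """Score every candidate with the same back-test and return the lowest-MAE one."""
    scores = {name: walk_forward_mae(series, f, min_train) for name, f in candidates.items()}
    best = min(scores, key=scores.get)
    return best, scores


sales = [10, 12, 14, 16, 18, 20, 22]
candidates = {"naive_last": naive_last, "moving_average_3": moving_average(3)}
print(select_best_model(sales, candidates))
```

On this steadily rising series, the naive forecaster misses by 2 each step while the three-point moving average lags by 4, so `naive_last` is selected. In practice the same loop could be rerun automatically whenever new data arrives.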

In summary, tracking key metrics in predictive analytics is essential for businesses aiming to act on data-driven insights. By focusing on accuracy, precision, recall, AUC, and other relevant metrics, organizations can assess the true performance of their predictive models. Continuous model validation and deployment monitoring keep models aligned with shifting business needs. Data quality measures and user engagement metrics strengthen predictive reliability, while automation streamlines processes, allowing organizations to optimize their predictive strategies. Together, these elements build stronger predictive analytics capabilities that foster informed decision-making and growth. With careful attention to these key metrics, businesses can unlock the potential of predictive analytics, driving innovation and sustaining competitive advantage in their industries.